Chapter 5: Q63E (page 241)

Refer to Exercise.

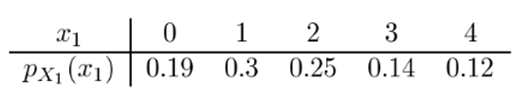

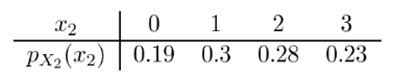

a. Calculate the covariance between \(X_1\) = the number of customers in the express checkout and \(X_2\) = the number of customers in the superexpress checkout.

b. Calculate \(V(X_1 + X_2)\). How does this compare to \(V(X_1) + V(X_2)\)?

Short Answer

a. \(\operatorname{Cov}(X_1, X_2) = 0.695\)

b. \(V(X_1 + X_2) = 4.0675\). Because \(V(X_1 + X_2) = V(X_1) + V(X_2) + 2\operatorname{Cov}(X_1, X_2)\) and the covariance is positive, \(V(X_1 + X_2)\) exceeds \(V(X_1) + V(X_2)\) by \(2(0.695) = 1.39\).
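The arithmetic behind both parts can be checked with a short script. This is a sketch under an explicit assumption: the joint pmf table of the referenced exercise appears only as an image above, so the values in `JOINT_PMF` are an assumed transcription of it (rows \(x_1 = 0,\dots,4\), columns \(x_2 = 0,\dots,3\)). With these values the script reproduces the Short Answer figures. The names `JOINT_PMF`, `expect`, and `summarize` are hypothetical helpers introduced only for illustration.

```python
# ASSUMPTION: transcription of the joint pmf p(x1, x2) from the referenced
# exercise's table (image not reproduced in text). Indexed [x1][x2]:
# x1 = 0..4 customers at the express checkout,
# x2 = 0..3 customers at the superexpress checkout.
JOINT_PMF = [
    [0.08, 0.07, 0.04, 0.00],
    [0.06, 0.15, 0.05, 0.04],
    [0.05, 0.04, 0.10, 0.06],
    [0.00, 0.03, 0.04, 0.07],
    [0.00, 0.01, 0.05, 0.06],
]


def expect(g, pmf):
    """Return E[g(X1, X2)] for a joint pmf stored as a nested list [x1][x2]."""
    return sum(
        p * g(x1, x2)
        for x1, row in enumerate(pmf)
        for x2, p in enumerate(row)
    )


def summarize(pmf):
    """Compute means, variances, covariance, and V(X1 + X2) from a joint pmf."""
    e1 = expect(lambda a, b: a, pmf)
    e2 = expect(lambda a, b: b, pmf)
    var1 = expect(lambda a, b: a * a, pmf) - e1 ** 2
    var2 = expect(lambda a, b: b * b, pmf) - e2 ** 2
    # Shortcut formula: Cov(X1, X2) = E(X1 X2) - E(X1) E(X2)
    cov = expect(lambda a, b: a * b, pmf) - e1 * e2
    # V(X1 + X2) = V(X1) + V(X2) + 2 Cov(X1, X2)
    var_sum = var1 + var2 + 2 * cov
    # Independent check: V(S) = E(S^2) - E(S)^2 with S = X1 + X2
    var_sum_direct = expect(lambda a, b: (a + b) ** 2, pmf) - (e1 + e2) ** 2
    return {
        "e1": e1,
        "e2": e2,
        "var1": var1,
        "var2": var2,
        "cov": cov,
        "var_sum": var_sum,
        "var_sum_direct": var_sum_direct,
    }


if __name__ == "__main__":
    stats = summarize(JOINT_PMF)
    # Expected (per the Short Answer): cov ≈ 0.695, V(X1 + X2) ≈ 4.0675
    print("Cov(X1, X2)     =", round(stats["cov"], 4))
    print("V(X1 + X2)      =", round(stats["var_sum"], 4))
    print("V(X1) + V(X2)   =", round(stats["var1"] + stats["var2"], 4))
```

Computing \(V(X_1 + X_2)\) two ways (through the covariance formula and directly from \(E[(X_1 + X_2)^2]\)) is a cheap consistency check: if the transcribed table were wrong, the results would still agree with each other but would no longer match 0.695 and 4.0675.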