Chapter 12: Q7SE (page 850)

In Sec. 10.2, we discussed \({\chi ^2}\) goodness-of-fit tests for composite hypotheses. These tests required computing M.L.E.'s based on the numbers of observations that fell into the different intervals used for the test. Suppose instead that we use the M.L.E.'s based on the original observations. In this case, we claimed that the asymptotic distribution of the \({\chi ^2}\) test statistic was somewhere between two different \({\chi ^2}\) distributions. We can use simulation to better approximate the distribution of the test statistic. In this exercise, assume that we are trying to test the same hypotheses as in Example 10.2.5, although the methods will apply in all such cases.
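
For reference, the statistic can be written out as follows. The notation is assumed from the standard treatment of these tests and does not appear on this page: \(k\) is the number of intervals, \(N_i\) is the number of observations in interval \(i\), and \(\pi_i(\hat\theta)\) is the probability of interval \(i\) under the fitted distribution.

\[
Q = \sum_{i=1}^{k} \frac{\left[ N_i - n\,\pi_i(\hat\theta) \right]^2}{n\,\pi_i(\hat\theta)}
\]

When \(\hat\theta\) is the M.L.E. of an \(s\)-dimensional parameter computed from the original observations, the two bounding distributions are \({\chi ^2}\) with \(k - 1 - s\) and \(k - 1\) degrees of freedom.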

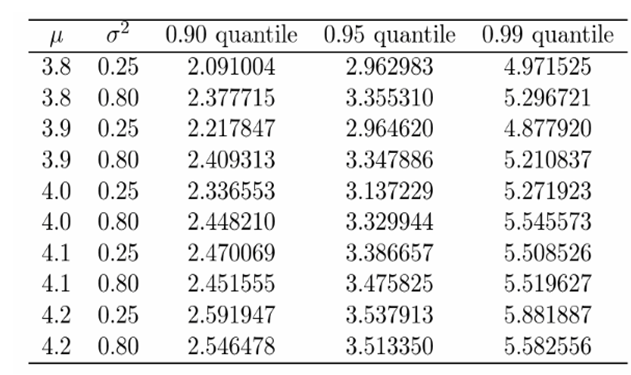

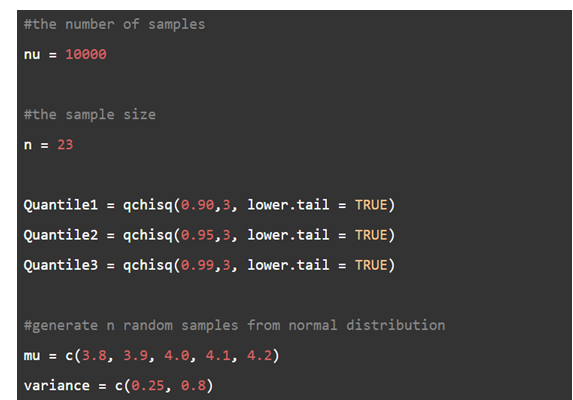

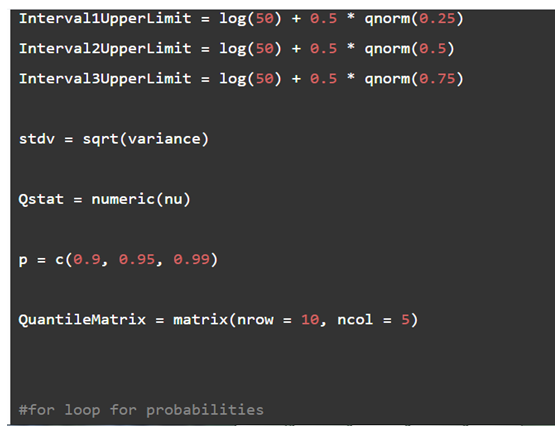

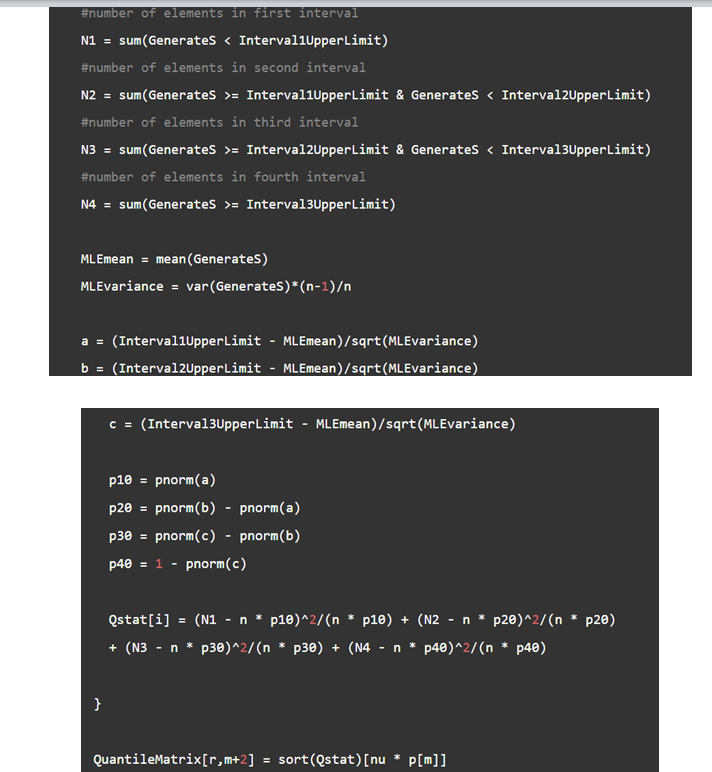

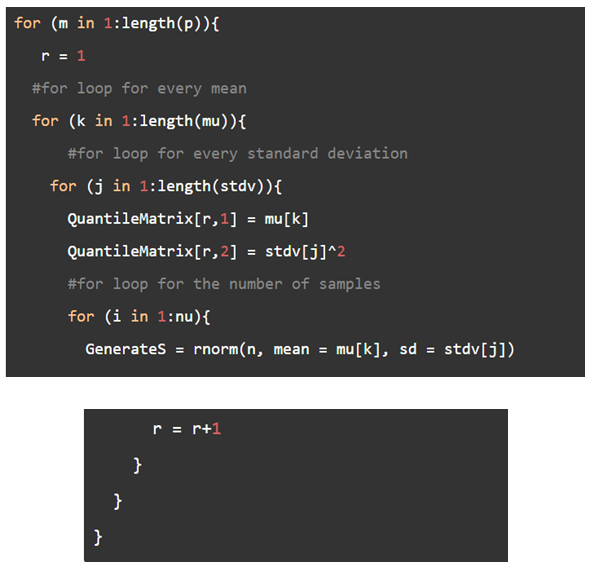

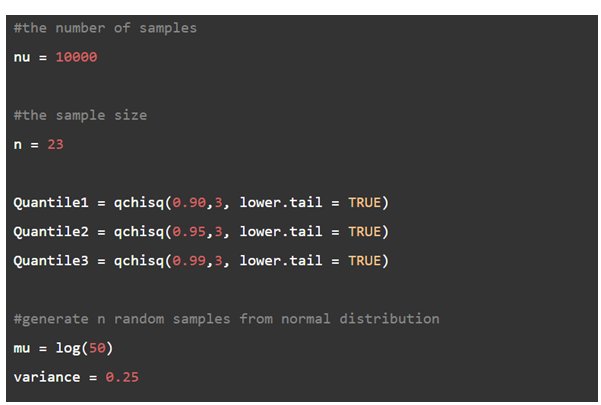

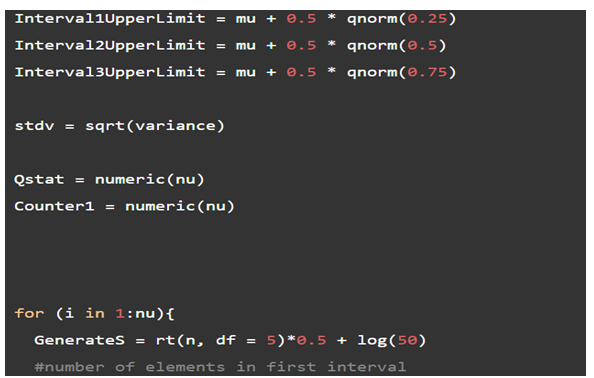

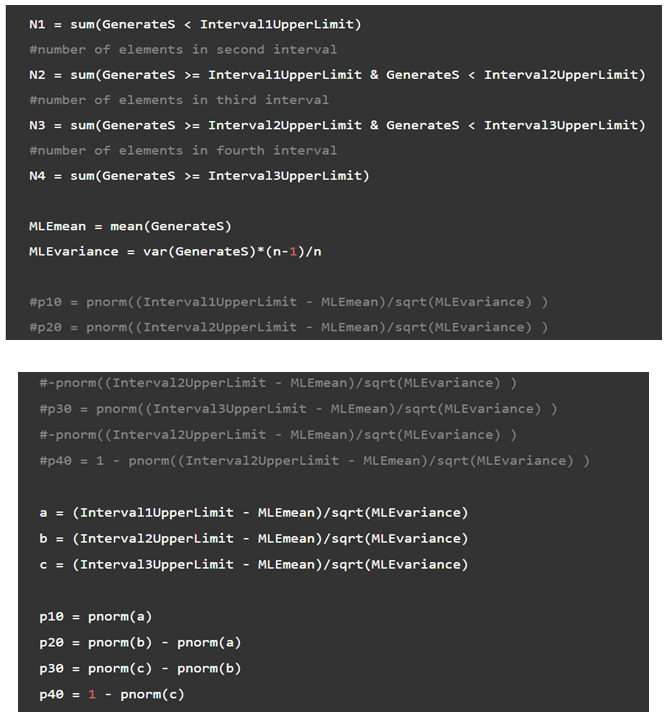

a. Simulate \(v = 1000\) samples of size \(n = 23\) from each of 10 different normal distributions. Let the normal distributions have means of \(3.8, 3.9, 4.0, 4.1,\) and \(4.2\). Let the distributions have variances of 0.25 and 0.8. Use all 10 combinations of mean and variance. For each simulated sample, compute the \({\chi ^2}\) statistic \(Q\) using the usual M.L.E.'s of \(\mu\) and \({\sigma ^2}\). For each of the 10 normal distributions, estimate the 0.9, 0.95, and 0.99 quantiles of the distribution of \(Q\).

b. Do the quantiles change much as the distribution of the data changes?

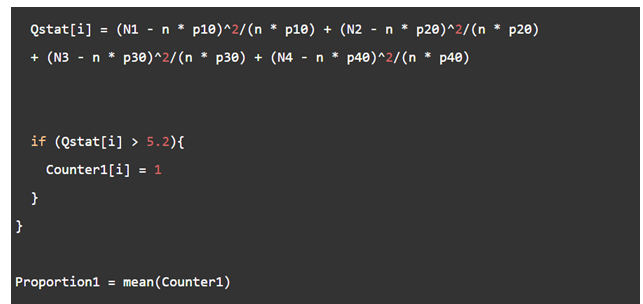

c. Consider the test that rejects the null hypothesis if \(Q \ge 5.2\). Use simulation to estimate the power function of this test at the following alternative: For each \(i\), \(\left( {{X_i} - 3.912} \right)/0.5\) has the \(t\) distribution with five degrees of freedom.
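
The power estimate in part (c) can be sketched the same way: draw samples from the alternative, compute \(Q\) for each, and record the fraction with \(Q \ge 5.2\). As before, the interval boundaries in `CUTS` are an assumption (quartiles of the normal distribution with mean 3.912 and variance 0.25), and the helper names are hypothetical. The \(t\) variate is built from a standard normal and an independent \({\chi ^2}\) variate with five degrees of freedom.

```python
import random
from statistics import NormalDist, fmean

# ASSUMPTION: four intervals cut at the quartiles of N(3.912, 0.25).
CUTS = [3.575, 3.912, 4.249]


def q_statistic(xs, cuts=CUTS):
    """Chi-square statistic Q with M.L.E.'s computed from the raw observations."""
    n = len(xs)
    mu_hat = fmean(xs)
    sigma_hat = (sum((x - mu_hat) ** 2 for x in xs) / n) ** 0.5  # divides by n
    fitted = NormalDist(mu_hat, sigma_hat)
    cdf = [0.0] + [fitted.cdf(c) for c in cuts] + [1.0]
    lower = [float("-inf")] + list(cuts)
    upper = list(cuts) + [float("inf")]
    q = 0.0
    for lo, hi, p_lo, p_hi in zip(lower, upper, cdf[:-1], cdf[1:]):
        observed = sum(lo < x <= hi for x in xs)
        expected = n * (p_hi - p_lo)
        if expected > 0:
            q += (observed - expected) ** 2 / expected
    return q


def draw_t5(rng):
    """One draw from the t distribution with five degrees of freedom."""
    z = rng.gauss(0.0, 1.0)
    w = rng.gammavariate(2.5, 2.0)  # gamma(shape 5/2, scale 2) is chi-square, 5 d.f.
    return z / (w / 5.0) ** 0.5


def estimate_power(v=1000, n=23, cutoff=5.2, rng=None):
    """Fraction of simulated samples from the alternative with Q >= cutoff."""
    rng = rng or random.Random()
    rejections = 0
    for _ in range(v):
        # Under the alternative, (X_i - 3.912) / 0.5 has the t distribution, 5 d.f.
        xs = [3.912 + 0.5 * draw_t5(rng) for _ in range(n)]
        rejections += q_statistic(xs) >= cutoff
    return rejections / v


if __name__ == "__main__":
    print(estimate_power(rng=random.Random(0)))
```

Repeating the call with different seeds gives a sense of how much the estimate moves from one simulation run to the next.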

Short Answer

(a) There are a total of 30 estimates: three quantiles for each of the 10 normal distributions.

(b) The quantiles do change as the distribution of the data changes, but the changes do not seem to be large.

(c) The estimated power varies around 0.0357.