Chapter 6: Q6.2-34E (page 331)

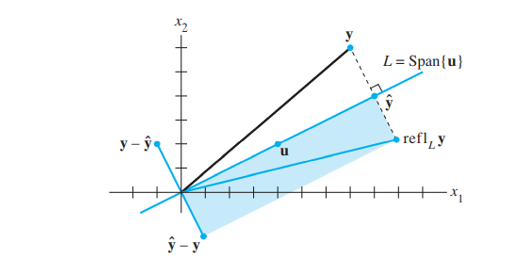

Question: Given \(\mathbf{u} \ne \mathbf{0}\) in \(\mathbb{R}^n\), let \(L = \operatorname{Span}\{\mathbf{u}\}\). For \(\mathbf{y}\) in \(\mathbb{R}^n\), the reflection of \(\mathbf{y}\) in \(L\) is the point \(\operatorname{refl}_L \mathbf{y}\) defined by

\(\operatorname{refl}_L \mathbf{y} = 2 \cdot \operatorname{proj}_L \mathbf{y} - \mathbf{y}\)

See the figure, which shows that \(\operatorname{refl}_L \mathbf{y}\) is the sum of \(\hat{\mathbf{y}} = \operatorname{proj}_L \mathbf{y}\) and \(\hat{\mathbf{y}} - \mathbf{y}\). Show that the mapping \(\mathbf{y} \mapsto \operatorname{refl}_L \mathbf{y}\) is a linear transformation.

Short Answer

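The mapping \(\mathbf{y} \mapsto \operatorname{refl}_L \mathbf{y}\) is a linear transformation, because the projection \(\mathbf{y} \mapsto \operatorname{proj}_L \mathbf{y}\) is linear and the reflection equals twice the projection minus the identity map.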
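
One way to verify the claim is sketched below. It assumes the standard formula for the orthogonal projection onto the line \(L\); the vectors \(\mathbf{y}, \mathbf{z}\) in \(\mathbb{R}^n\) and the scalars \(c, d\) are arbitrary and are introduced here only for illustration.

The projection onto \(L\) is

\(\operatorname{proj}_L \mathbf{y} = \dfrac{\mathbf{y} \cdot \mathbf{u}}{\mathbf{u} \cdot \mathbf{u}}\, \mathbf{u}\)

Since the dot product is linear in its first argument, the projection is linear:

\(\operatorname{proj}_L (c\mathbf{y} + d\mathbf{z}) = \dfrac{(c\mathbf{y} + d\mathbf{z}) \cdot \mathbf{u}}{\mathbf{u} \cdot \mathbf{u}}\, \mathbf{u} = c\, \dfrac{\mathbf{y} \cdot \mathbf{u}}{\mathbf{u} \cdot \mathbf{u}}\, \mathbf{u} + d\, \dfrac{\mathbf{z} \cdot \mathbf{u}}{\mathbf{u} \cdot \mathbf{u}}\, \mathbf{u} = c \operatorname{proj}_L \mathbf{y} + d \operatorname{proj}_L \mathbf{z}\)

Substituting this into the definition of the reflection gives

\(\operatorname{refl}_L (c\mathbf{y} + d\mathbf{z}) = 2 \operatorname{proj}_L (c\mathbf{y} + d\mathbf{z}) - (c\mathbf{y} + d\mathbf{z})\)

\(= 2c \operatorname{proj}_L \mathbf{y} + 2d \operatorname{proj}_L \mathbf{z} - c\mathbf{y} - d\mathbf{z}\)

\(= c\left(2 \operatorname{proj}_L \mathbf{y} - \mathbf{y}\right) + d\left(2 \operatorname{proj}_L \mathbf{z} - \mathbf{z}\right)\)

\(= c \operatorname{refl}_L \mathbf{y} + d \operatorname{refl}_L \mathbf{z}\)

The reflection therefore preserves both vector addition and scalar multiplication, which is exactly the condition for a linear transformation.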